Dark backgrounds, light backgrounds, and OCR

One of my favorite parts of Apse to build was the way it handles light and dark backgrounds. Text recognition algorithms are tuned for black letters on a white background, like a printed page.

Usually this isn't a problem, because you can invert the colors if an image has light text on a dark background. This image also scans as "hello world".

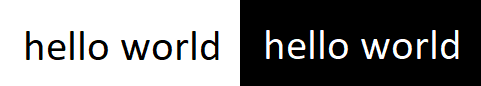
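As a rough illustration of that whole-image inversion, here is a minimal sketch. It assumes an 8-bit grayscale image stored as a list of rows (0 is black, 255 is white) and uses a simple mean-brightness check to decide whether the image is "mostly dark". The function names and the threshold of 128 are illustrative assumptions, not Apse's actual code.

```python
def mean_brightness(image):
    """Average pixel value of an 8-bit grayscale image (a list of rows)."""
    pixels = [p for row in image for p in row]
    return sum(pixels) / len(pixels)


def invert(image):
    """Flip every pixel: 0 (black) becomes 255 (white) and vice versa."""
    return [[255 - p for p in row] for row in image]


def normalize_for_ocr(image, threshold=128):
    """Invert the whole image if it is mostly dark (assumed heuristic),
    so that text ends up dark on a light background before OCR."""
    if mean_brightness(image) < threshold:
        return invert(image)
    return image


# Light text on a dark background gets flipped to dark text on light.
dark = [[0, 255, 0], [0, 0, 0]]
print(normalize_for_ocr(dark))  # [[255, 0, 255], [255, 255, 255]]
```

A single global decision like this is exactly what breaks down when one screenshot contains both kinds of background.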

But inverting the whole image won't work for Apse, because your screen might have both light and dark areas at the same time, such as a dark-themed text editor open next to your browser. This image scans as "hello world igrelifemuZeyale!"

So Apse segments the image into dark and light regions and inverts only the dark ones before converting to text. With that preprocessing step in place, the output no longer contains junk like "igrelifemuZeyale!".
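The post doesn't say how Apse finds those regions, so the following is only a minimal sketch that swaps in the simplest stand-in: split the image into fixed square tiles and invert any tile whose average brightness falls below a threshold. It assumes the same 8-bit grayscale list-of-rows format as a plain inversion would; the function name `selectively_invert`, the tile size, and the threshold of 128 are all hypothetical choices for illustration.

```python
def selectively_invert(image, tile=2, threshold=128):
    """Invert only the tiles whose mean brightness marks them as dark.

    Hypothetical fixed-size tiling; `tile` and `threshold` are
    illustrative parameters, not values taken from Apse.
    """
    height = len(image)
    width = len(image[0]) if height else 0
    out = [row[:] for row in image]  # copy so the input is left untouched
    for top in range(0, height, tile):
        for left in range(0, width, tile):
            rows = range(top, min(top + tile, height))
            cols = range(left, min(left + tile, width))
            block = [image[y][x] for y in rows for x in cols]
            # A dark tile (light text on dark) is flipped to dark-on-light.
            if sum(block) / len(block) < threshold:
                for y in rows:
                    for x in cols:
                        out[y][x] = 255 - image[y][x]
    return out


# Left half: light text on dark. Right half: dark text on light.
mixed = [[0, 255, 255, 0],
         [0, 0, 255, 255]]
print(selectively_invert(mixed))  # [[255, 0, 255, 0], [255, 255, 255, 255]]
```

Fixed tiles are crude: a word that straddles a tile boundary can end up half inverted, so a real segmenter would more plausibly follow the edges of each light or dark region rather than a grid.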

If you found this interesting, you can see Apse in action at apse.io.